Humans can tell voices apart even when several people speak at once. Under the right circumstances, we can easily tune out everything else and focus on a single speaker, but a microphone picking up sound from multiple sources can't do the same thing. Speech recognition has only gone mainstream over the past decade, and its capabilities are still limited. Researchers at Google have been working on isolating individual audio sources, such as speech, in videos, and they've made great progress, judging by some of the results they've posted.

The researchers at Google have built a machine learning-powered system that can pick out specific sounds, such as speech, in a video. Not only can it isolate spoken words from background audio, but it can also entirely separate the speech of two people talking simultaneously. The concept seems easy enough when the two speakers have drastically different voices: if the system isolates audio based on frequency, then the bigger the pitch difference between the speakers' voices, the better the results. The problem gets trickier when several sounds are involved, along with background noise.

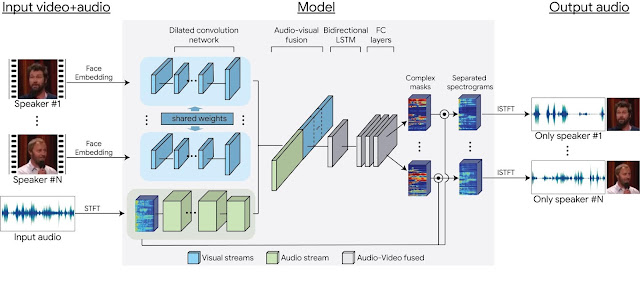

To tackle that, the researchers at Google used "fake cocktail parties," composed of manually spliced "clean" sources of audio and video, overlaid with similarly clean background noise. That data is fed to the network, which is trained on facial movements from the video and spectrograms of the merged audio track. The system can then determine which frequencies at which times most likely correspond to a given speaker, and it extracts them into a new, isolated audio track that comes close to, if not beats, what a human listener could manage.
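
To make the "which frequencies at which times" idea concrete, here is a toy sketch in Python. It is not Google's system: the two "voices" are synthetic tones, every name and value in it (`fake_voice`, `SR`, `FRAME`, `HOP` and so on) is made up for the example, and the mask is an oracle computed from the clean sources. The real network has to predict something like that mask from the mixed audio and the speakers' faces.

```python
import numpy as np

SR = 8192      # sample rate in Hz (assumed for the demo)
FRAME = 512    # samples per analysis frame
HOP = 256      # 50% overlap between frames
# Periodic Hann window: copies shifted by HOP sum to exactly 1.
WINDOW = 0.5 - 0.5 * np.cos(2 * np.pi * np.arange(FRAME) / FRAME)


def stft(x):
    """Complex spectrogram: rows are time frames, columns are frequency bins."""
    return np.array([np.fft.rfft(WINDOW * x[s:s + FRAME])
                     for s in range(0, len(x) - FRAME + 1, HOP)])


def istft(spec, length):
    """Overlap-add the inverse FFT of each frame back into a waveform."""
    out = np.zeros(length)
    for i, frame in enumerate(spec):
        out[i * HOP:i * HOP + FRAME] += np.fft.irfft(frame, n=FRAME)
    return out


def fake_voice(t, pitch, wobble):
    """Stand-in for a clean speech clip: three harmonics with a slow loudness envelope."""
    harmonics = sum(np.sin(2 * np.pi * pitch * k * t) / k for k in (1, 2, 3))
    return harmonics * (0.6 + 0.4 * np.sin(2 * np.pi * wobble * t))


def snr_db(reference, estimate):
    """Signal-to-noise ratio of an estimate, ignoring the tapered edges."""
    ref, est = reference[HOP:-HOP], estimate[HOP:-HOP]
    return 10 * np.log10(np.sum(ref ** 2) / np.sum((ref - est) ** 2))


rng = np.random.default_rng(0)
t = np.arange(SR) / SR                       # one second of audio
speaker_a = fake_voice(t, pitch=200.0, wobble=3.0)
speaker_b = fake_voice(t, pitch=330.0, wobble=2.0)
noise = 0.1 * rng.standard_normal(SR)
mixture = speaker_a + speaker_b + noise      # the "fake cocktail party"

# Oracle mask (an assumption of this sketch): for each time-frequency cell,
# the share of energy that belongs to speaker A, computed from the clean sources.
power_a, power_b, power_n = (np.abs(stft(s)) ** 2 for s in (speaker_a, speaker_b, noise))
mask_a = power_a / (power_a + power_b + power_n + 1e-12)

# Keep only speaker A's share of every cell, then turn it back into audio.
isolated_a = istft(mask_a * stft(mixture), len(mixture))

snr_before = snr_db(speaker_a, mixture)
snr_after = snr_db(speaker_a, isolated_a)
print(f"SNR of speaker A in the mixture: {snr_before:.1f} dB")
print(f"SNR of speaker A after masking:  {snr_after:.1f} dB")
```

Because the training clips are spliced together from clean recordings, the correct answer for every time-frequency cell is known in advance, which is presumably what makes the "fake cocktail party" approach workable as training data.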

While it is impressive as a scientific breakthrough, the technology could spell doom for individual privacy rights. With a little work, anyone with the right equipment will be able to isolate an individual voice from a crowd. But, then again, when has personal privacy ever been a hindrance to technology?

News Source: Google
